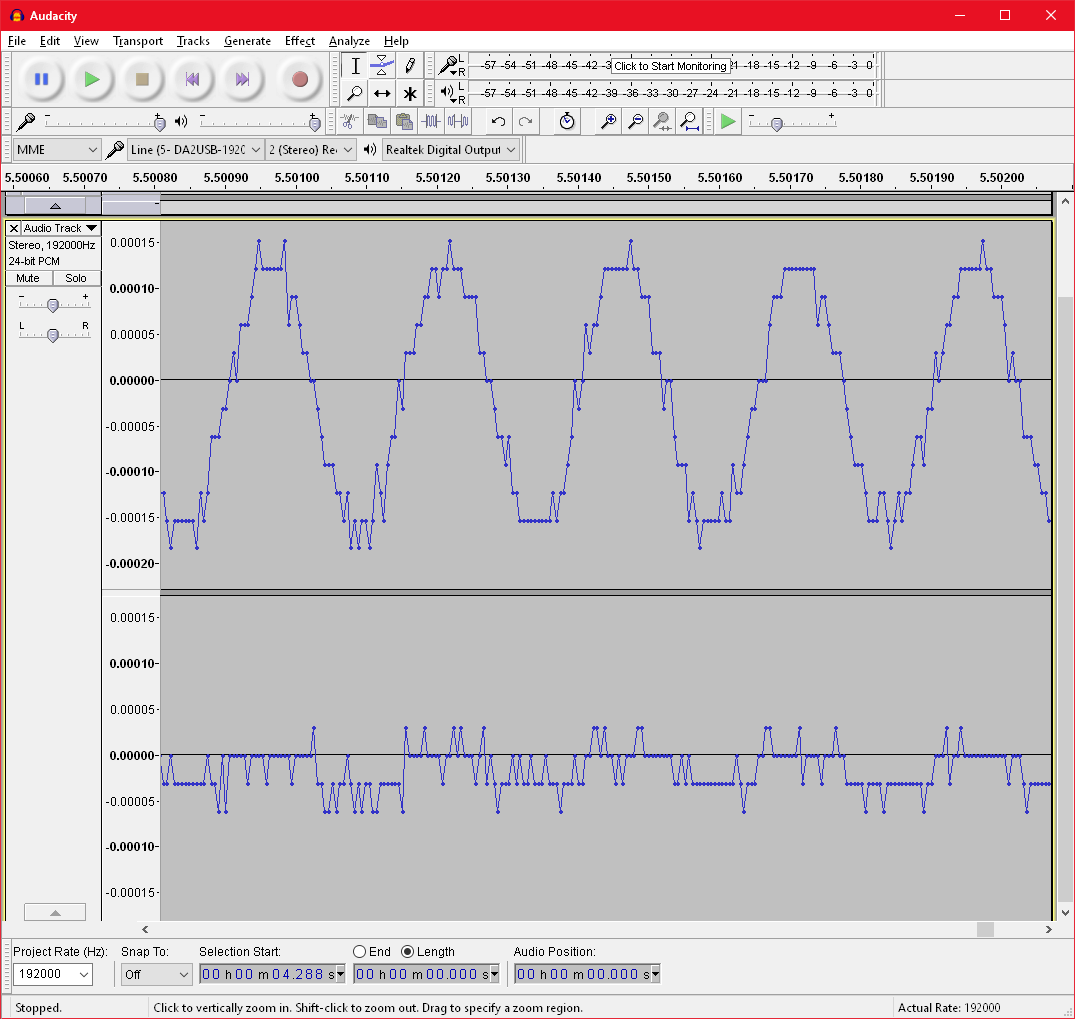

I have had a few Foobar2000 crashes, especially with SIMD instructions enabled, even though my processor supports SSE4.1. But now everything is working with PCM, SACD and DVD-Audio playback.

First, I installed your official Asio2 1.1 beta2 plugin, but was having issues with playback (Foobar2000 crashes, no automatic shift in sample rate, sample rate locked, etc.). I then installed the beta5 plugin (Asio only update), disabled SIMD instructions and Visualisations, and manually set the "end point buffer sizes" in Foobar2000 advanced preferences (according to hardware specs).

Now with the Asio2 1.1 beta5 (see post #2 above), Foobar2000 sound quality is on par with or maybe even a little bit better than MPC-HC. Sound quality is better across the whole frequency spectrum compared to the official Asio component, but I especially notice an improvement in sound stage, image, low-end and high-end clarity, and detail. The stereo perspective is "big", with voices and instruments placed in 3D across it.

Thanks for this high-end WASAP2 & ASIO2 plugin for Foobar2000 :-) I just recently installed your plugin, because I discovered that MPC-HC had better sound quality with the Multichannel Directshow Asio Renderer than Foobar2000 with the official Asio component (same experience as M Z writes about in the post above). Google: multichannel directshow asio renderer (version 2.0 is free).

In that situation, the asio2 plugin cannot use any other endpoint buffer size configured explicitly in the advanced plugin config, but uses the returned preferred size instead.

Concerning your third issue: it seems strange. Do you see the foo_out_wasap2 plugin in the foobar component config (see attached screenshot)? If not, can you copy wasap2.dll again into the component folder of your foobar installation?
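The buffer-size fallback mentioned in the reply (a configured endpoint buffer size is ignored when the driver does not allow it, and the driver's preferred size is used instead) can be sketched roughly as follows. This is only an illustration, not the plugin's actual code: the function name `choose_buffer_size` and its parameters are hypothetical, and the driver's granularity value is deliberately ignored to keep the sketch small.

```python
def choose_buffer_size(requested: int, min_size: int, max_size: int, preferred: int) -> int:
    """Pick the buffer size (in frames) to use for an ASIO-style driver.

    Hypothetical helper: `requested` is the size configured by the user,
    the other three values are what the driver reports. Granularity is
    not modelled here.
    """
    # Honour the configured size only when the driver's range allows it.
    if min_size <= requested <= max_size:
        return requested
    # Otherwise fall back to the size the driver prefers.
    return preferred


# A driver reporting min == max == preferred == 544 leaves no choice:
# any other configured size is replaced by 544.
forced = choose_buffer_size(1024, 544, 544, 544)

# A driver with a real range keeps the configured size.
kept = choose_buffer_size(256, 128, 1024, 512)
```

With a driver that reports a single allowed size, the configured value has no effect, which matches the limitation described in the thread.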

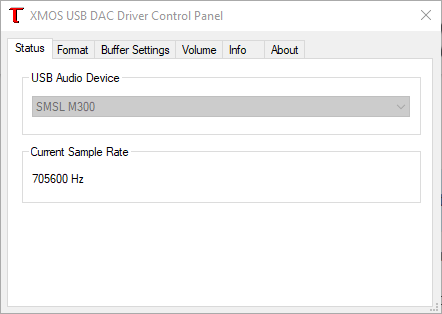

Concerning your 1st issue (sound corrupted while switching to a track with different sample rate): I think that the problem occurs because, while initializing the asio driver, I am querying the driver for the asio buffer size before setting the driver sample rate according to the track's. Give the attached patch a try: I have changed the order and now set the sample rate before querying the driver for the buffer size.

Concerning your 2nd issue: the limitation comes from your asio driver. You can see it in the log:

Renderer::init_asio_static_data - ASIOGetBufferSize (min:544, max:544, preferred:544, granularity:0)

The asio driver reports that it supports only buffer sizes falling within the interval defined by the min and max values, which here are both 544.

I've created the media types using the WAVEFORMATEXTENSIBLE structures for the devices and my own custom WAVEFORMATEXTENSIBLE that best describes the internal implementation of the DSP pipeline. This is done using MFInitMediaTypeFromWaveFormatEx. The IMFTransform objects (two of them - one for input resampling, one for output resampling) accept both their input and output media types, and only have a problem when it's actually time to do the resampling (IMFTransform::ProcessOutput is the only part in the process where I ever get an error).

The third issue is that in order to resample there's a lot of buffer copying that has to happen and, if I understand this example correctly, a lot of unnecessary memory allocations / deletions.

I've run into a few issues. One, I can't find any explanation as to how this API works. It's written as if it's asynchronous and sends info to a driver somewhere (command queues, locks on the buffers), but it's mentioned several times that all processing is done synchronously, i.e. within the same thread of my own process (which is what I want). Naturally, this confusion makes me wonder if this is the correct API for what I'm doing - real-time audio processing.

The second is actually getting the resampler to obey - IMFTransform::ProcessOutput always returns MF_E_TRANSFORM_NEED_MORE_INPUT.
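For context on MF_E_TRANSFORM_NEED_MORE_INPUT: in the Media Foundation transform model, that result from ProcessOutput means the transform cannot produce output until ProcessInput has supplied more data, so callers normally alternate between feeding input and draining output. The sketch below is a toy model of that feed-and-drain loop in Python, not Media Foundation code and not a diagnosis of the problem described here. `ToyTransform`, `NeedMoreInput`, `NotAccepting` and `pump` are all hypothetical names invented for illustration.

```python
class NeedMoreInput(Exception):
    """Stand-in for MF_E_TRANSFORM_NEED_MORE_INPUT."""


class NotAccepting(Exception):
    """Stand-in for MF_E_NOTACCEPTING (output must be drained first)."""


class ToyTransform:
    """Toy transform that emits fixed-size blocks of whatever it was fed."""

    def __init__(self, block_size: int):
        self.block_size = block_size
        self._pending = []

    def process_input(self, samples):
        # Refuse new input while a full output block is still waiting.
        if len(self._pending) >= self.block_size:
            raise NotAccepting()
        self._pending.extend(samples)

    def process_output(self):
        # Without enough buffered input there is nothing to hand out.
        if len(self._pending) < self.block_size:
            raise NeedMoreInput()
        block = self._pending[:self.block_size]
        self._pending = self._pending[self.block_size:]
        return block


def pump(transform, chunks):
    """Feed each chunk, then drain output until the transform wants more input."""
    produced = []
    for chunk in chunks:
        transform.process_input(chunk)
        while True:
            try:
                produced.append(transform.process_output())
            except NeedMoreInput:
                break  # normal condition: go back and feed the next chunk
    return produced


blocks = pump(ToyTransform(4), [[1, 2], [3, 4, 5], [6, 7, 8]])
```

In this model the "need more input" condition is an ordinary part of the loop rather than a failure. A transform that reports it on every call has simply never been given enough input from its own point of view, which is one thing worth checking in the real code path.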

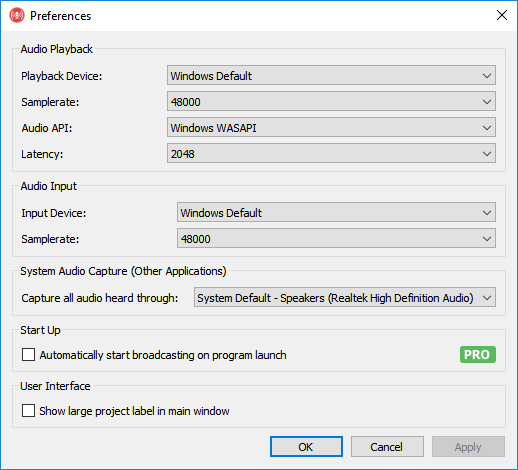

The application's audio stream uses both an input and an output device, each of which is set to use its default format (retrieved via PKEY_AudioEngine_DeviceFormat). The internal audio DSP pipeline uses a constant format of 48000 Hz stereo floating point; however, both the input and output devices can have any number of channels, sample rates, and formats (PCM or IEEE_FLOAT). To compensate, I want to resample the input device's format to my own format, and then resample that format to the output device's format when all DSP is complete.

I'm trying to use the Audio Resampler DSP API. I'm using the IMFTransform object, which is part of the Windows Media Foundation API.
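The two conversions described here (device format to the internal 48000 Hz stereo float format, then internal format to the output device format) change how many frames and bytes each buffer holds. A minimal sketch of that bookkeeping, in Python for brevity: `AudioFormat`, `INTERNAL` and `resampled_frames` are hypothetical names, and the 44100 Hz 16-bit device format is an assumed example, not something stated in the question.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class AudioFormat:
    sample_rate: int      # frames per second, in Hz
    channels: int
    bits_per_sample: int
    is_float: bool        # True for IEEE_FLOAT, False for integer PCM

    @property
    def bytes_per_frame(self) -> int:
        # One frame holds one sample for every channel.
        return self.channels * self.bits_per_sample // 8


# The internal pipeline format from the text: 48000 Hz, stereo, 32-bit float.
INTERNAL = AudioFormat(sample_rate=48000, channels=2, bits_per_sample=32, is_float=True)


def resampled_frames(frames_in: int, src: AudioFormat, dst: AudioFormat) -> Fraction:
    """Exact number of frames after converting from src's rate to dst's rate.

    Returned as a Fraction because the ratio is often not a whole number;
    a real resampler carries the remainder over to the next buffer.
    """
    return Fraction(frames_in * dst.sample_rate, src.sample_rate)


# Assumed example device: 44100 Hz, stereo, 16-bit PCM.
device = AudioFormat(sample_rate=44100, channels=2, bits_per_sample=16, is_float=False)

to_internal = resampled_frames(441, device, INTERNAL)    # 10 ms of input
back_to_device = resampled_frames(480, INTERNAL, device)
```

Because the frame ratio is rarely a whole number for arbitrary buffer sizes, input and output buffers on either side of a resampler generally cannot be assumed to line up one-to-one.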

I'm writing a Pro Audio application using exclusive-mode WASAPI.